Are you ready to upskill into one of the most in-demand engineering roles of the decade? Being able to acquire data from anywhere, in any format, is a skill worth investing your energy in. Organizations today struggle to find talent who can Extract, Transform, Aggregate, Load and Maintain disparate forms of data (structured, semi-structured, unstructured) and still handle the volume. The era of traditional ETL is slowly fading, and it’s time to build a skill set that is independent of any one platform or technology. Open-source yet powerful platforms and programming languages are the winning technologies in the industry these days.

To become a Data Engineer, you will need to be skilled in Programming, Version Control Systems, Big Data Tools, the Cloud stack, Databases, Data Warehouses, Data Structures, Schema Design, Automation, Docker and Maintenance. More importantly, you need to understand the context of the downstream data consumers, without which a Data Engineer can bring little value to the table. “Business Intelligence Engineer” is a newly emerging career that is an amalgamation of “Data Engineering” and “Data Analytics”.

Data Engineer

Data Engineering is a career best built with focused, well-guided content. Without real hands-on practice and placement support, learning is incomplete. You will be guided through step-by-step courses, ordered by their importance and complexity in building the career.

Here is the roadmap to kickstart your learning right away.

Step: 1

Coding has become mandatory in almost all roles in the software industry. Whether you Develop Tools, Automate jobs

Step: 2

You are all set to begin your learning path with this first stepping stone and foundational Essential course.

Step: 3

Ramp up your essential knowledge into market ready skills with this Big Data Engineering Booster course.

We have some awesome videos on our YouTube Channel covering use cases, tricky problems, course demos and much more. Jump over and play through them.

Related Posts

- myadmin

- Data Analytics

- 1 Comment

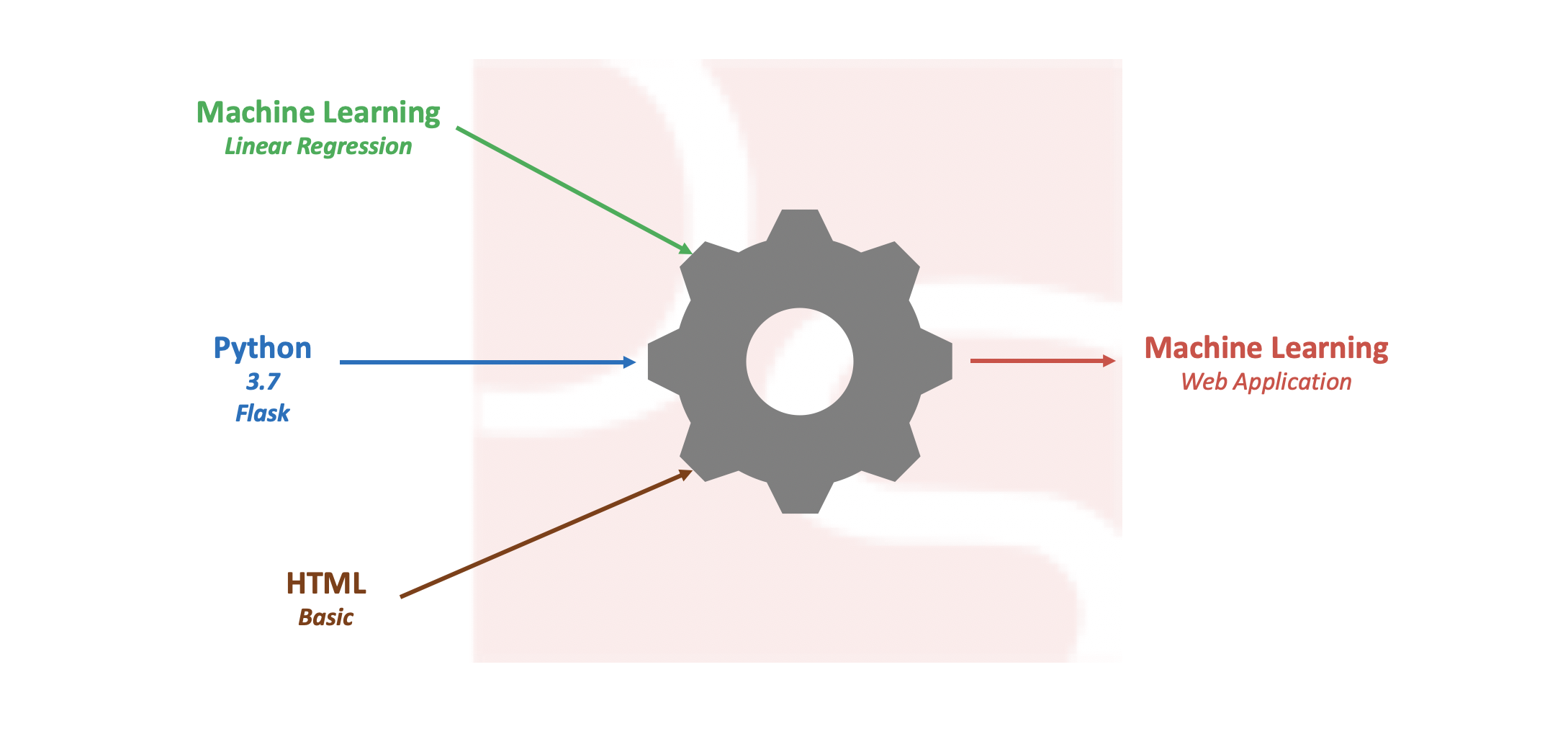

Build Simple Machine Learning Web Application using Python

Pre-processing data and developing an efficient model on a given data set is one of the daily tasks of a machine learning engineer, typically using languages like Python or R. Not every machine learning engineer gets the chance, or has the requirement, to integrate the model into real-time applications such as web or mobile apps for end users […]

- myadmin

- Data Analytics

- 0 Comments

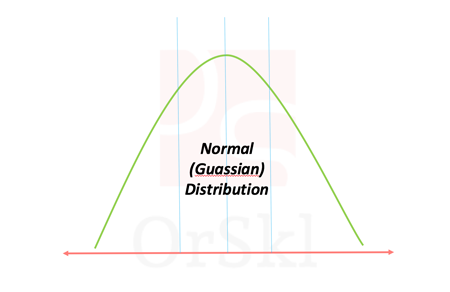

Ways to identify if data is Normally Distributed

The normal distribution, also known as the Gaussian distribution, is one of the core probabilistic models for data scientists. Naturally occurring high-volume data is often approximated as following a normal distribution. According to the central limit theorem, the sum or mean of many independent variables also tends to follow a normal distribution, irrespective of their individual distributions. In reality we […]

- myadmin

- Data Analytics

- 0 Comments

Will highly correlated variables impact Linear Regression?

Linear regression is one of the most basic and widely used machine learning algorithms for prediction in data science and analytics. Data scientists deal with high-dimensional data, and it can be quite challenging to build the simplest linear model possible. In the process of building the model there are possibilities of […]

- myadmin

- General topics

- 14 Comments

Will Oracle 18c impact DBA roles in the market?

There has been serious concern in the market following the announcement of the Oracle Autonomous Database 18c release. Should this be considered a threat to Oracle DBA roles in the market? Let us gather the facts available on the Oracle website to understand what exactly this is going to be and focus on skill improvements accordingly. […]

- myadmin

- General topics

- 4 Comments

How does Oracle Database perform instance recovery after failures?

INSTANCE RECOVERY – Oracle Database has an inherent feature of recovering from instance failures automatically. SMON is the background process that plays a key role in making this possible. Though this is an automatic process that runs after the instance faces a failure, it is very important for every DBA to understand how it is made […]

- myadmin

- Performance tuning

- 16 Comments

Why should we configure limits.conf for Oracle database?

Installing Oracle Database is a very common activity for every DBA. In this process, the DBA configures all the prerequisites listed in the Oracle installation document for the relevant version and OS architecture. One very common configuration on UNIX platforms is setting up the limits.conf file in the /etc/security directory. But why should […]

- myadmin

- RMAN

- 9 Comments

Why RMAN needs REDO for Database Backups?

RMAN is one of the key utilities that every Oracle DBA depends on for regular day-to-day backup and restoration activities. It has proven to be the best utility for hot backups, that is, inconsistent backups taken while the database is running and processing user sessions. With all that known, as an Oracle DBA it will […]

- myadmin

- General topics

- 10 Comments

Will huge Consistent Reads flood the BUFFER CACHE?

The Oracle Database BUFFER CACHE is one of the core architectural memory components; it holds copies of data blocks read from datafiles. In my journey as an Oracle DBA, this memory component has played a major role in handling Performance Tuning issues. In this blog, I will demonstrate a case study and analyze the behavior of BUFFER […]

- myadmin

- Storage

- 11 Comments

Can a data BLOCK accommodate rows of distinct tables?

In Oracle Database, a data BLOCK is defined as the smallest storage unit in the data files. But there are many more concepts surrounding the BLOCK architecture. One of them is understanding whether a BLOCK can accommodate rows from distinct tables. In this article, we are going to arrive at a justifiable answer with […]

- myadmin

- Performance tuning

- 32 Comments

Can you really flush Oracle SHARED_POOL?

One of the major players in the SGA is the SHARED_POOL, without which, we can say, there would be no query executions. During some performance tuning trials, you may have used the ALTER SYSTEM command to flush out the contents of the SHARED_POOL. Do you really know what exactly this command cleans out? As we know that internally […]